Step away for a day and a half and look at all that I missed. Since my last post in the thread I went to a cheer competition for my daughter, got rained out trying to hunt some coyotes, and since the hunting got rained out I did my taxes. Nonetheless... and hopefully not too far from where the thread has gone...

In a post a bit earlier I talked about having some physical basis for the equation you select when you are trying to fit a function to a data set, such as in this case, where we are trying to create a conversion between two measurements that are clearly derived from the same physical input but are not the same thing. I am putting forward that you can't just pick a random equation simply because it gives you a good R^2 value and assume it is the correct equation for the data. Ideally you have some physics-based principles to help guide the selection of the equation to be fitted to the data. Take my earlier example: if you know that signal strength fades with the inverse square law, then fitting some other equation to the data is going to lead to a bad function, even if you end up with a decent R^2.

In an attempt to illustrate that, I started playing in Excel, doing some trendline fits using the built-in functions in the graph tool. Then I started doing the fits manually and calculating the R^2 by hand in the spreadsheet to refresh my mind about where that all comes from. I have never had a formal statistics class, just what I picked up in various engineering classes along the way and a two- or three-day class on Minitab a few years ago. After some playing I had a system in Excel that would let me create a custom equation and use Excel's built-in Solver to run a least squares regression to solve for the equation's coefficients and the resulting R^2. Next I created a data set for my example. It is completely made up from a simple function, with time on the x-axis and some 1-dimensional displacement on the y-axis. No units required, just a simple 1-dimensional displacement-over-time function. I then added a bit of noise to the data set to simulate a sensor. The noise is random, capped at +/- 2% of full scale, in this case a random number between -0.46 and +0.46 since full scale was ~23 (units and scale are arbitrary). This made-up function is sampled at 10 Hz, and each sample then has a random amount of noise added to make a noisy data set.

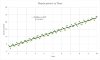
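For anyone who wants to play along outside of Excel, here is a rough Python sketch of the two pieces described above: sampling a function at 10 Hz with noise capped at +/- 2% of full scale, and calculating R^2 by hand from the residuals. The function names, the 10 second duration, and the `stand_in` straight line are all my own placeholders for illustration, not what is in the spreadsheet, and I am assuming the usual definition R^2 = 1 - SS_res / SS_tot.

```python
import random

def sample_with_noise(clean_fn, duration_s=10.0, rate_hz=10.0,
                      full_scale=23.0, noise_frac=0.02, seed=0):
    """Sample clean_fn at rate_hz and add uniform noise of +/- noise_frac * full_scale.

    duration_s and seed are assumptions made for this sketch.
    """
    rng = random.Random(seed)
    limit = noise_frac * full_scale  # 0.46 for a full scale of ~23
    n = int(round(duration_s * rate_hz)) + 1
    ts = [i / rate_hz for i in range(n)]
    ys = [clean_fn(t) + rng.uniform(-limit, limit) for t in ts]
    return ts, ys

def sum_squared_error(model_fn, xs, ys):
    """The quantity a least squares solver tries to minimize."""
    return sum((y - model_fn(x)) ** 2 for x, y in zip(xs, ys))

def r_squared(model_fn, xs, ys):
    """R^2 = 1 - SS_res / SS_tot, calculated by hand."""
    mean_y = sum(ys) / len(ys)
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - sum_squared_error(model_fn, xs, ys) / ss_tot

# Hypothetical stand-in function that reaches ~23 after 10 s (not the real one).
stand_in = lambda t: 2.3 * t
ts, ys = sample_with_noise(stand_in)
print(len(ts), "samples, R^2 of the stand-in against its own noisy data:",
      round(r_squared(stand_in, ts, ys), 4))
```

A least squares fit is then just a search for the coefficients that make `sum_squared_error` as small as possible, which is the job Solver was doing in the spreadsheet.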

The first thing I did to the data set was throw it into a graph and let Excel add a simple linear fit (click the Spoiler tag for the graph). Excel fit the equation y = a*x + b using its internal regression to solve for a and b. The equation and R^2 value are on the graph, and it looks like a pretty good fit. There is definitely a linear correlation between time and displacement, and the equation looks like it describes that pretty well based on the R^2. But the calculated error between the linear equation and the actual data shows some pretty bad local error, especially at the low end. The average absolute error is 7.2%, with a min/max of -26% to +58%. That seems considerably higher than we would expect from our +/- 2% sensor noise.

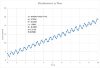

But now a little bit more information about the system. The position sensor is actually measuring the position of one mass of a mass-spring-mass system that is freely sliding down our 1-dimensional world with no gravity (we are ignoring friction etc. for simplicity's sake). With this additional insight into the physics involved, a better equation can be selected. Clearly there is a linear aspect to the motion (supported by the initial linear regression), but since there is a mass-spring-mass aspect to the system, a harmonic component to the motion is likely. This led to the creation of an equation based on what is known about the physics of the system, something like this: y = a*x + b + c*sin(d*x + e). I used my least squares setup and the internal Solver to find the values for a, b, c, d, and e, and plotted that. Click the spoiler.

Now that looks much better. Having some physical intuition into what was creating the data helped create a function that fits the data much, much better, even though the R^2 value is only marginally better. The error in this new equation works out to an average absolute error of only 2.3% and a min/max of -11% to +10%.
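Here is a rough Python sketch of the same comparison for anyone without Excel handy. To be clear about the assumptions: it rebuilds a data set from the generating function quoted at the end of this post (with the sine taken as a function of x), it assumes 10 seconds of data and a fixed random seed, and it assumes percent error means the error as a percentage of the measured value. It also does not do what Solver does. Instead it scans the frequency d over a grid I picked (1 to 30 rad/s) and, for each d, solves the remaining coefficients by ordinary linear least squares, using c*sin(d*x + e) = A*sin(d*x) + B*cos(d*x). The numbers will not match my spreadsheet exactly because the noise is different.

```python
import math
import random

# Assumed data set: 10 s at 10 Hz from the function quoted at the end of the
# post (sine taken as a function of x), plus uniform noise of +/- 0.46.
rng = random.Random(1)
xs = [i / 10 for i in range(101)]
ys = [2 * x + 3 + math.sin(2.1 * 2 * math.pi * x + math.radians(135))
      + rng.uniform(-0.46, 0.46) for x in xs]

def solve(A, b):
    """Solve A p = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= factor * M[col][k]
    p = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(M[i][k] * p[k] for k in range(i + 1, n))
        p[i] = (M[i][n] - tail) / M[i][i]
    return p

def fit(basis):
    """Linear least squares via the normal equations; returns (params, predictions)."""
    rows = [basis(x) for x in xs]
    n = len(rows[0])
    BtB = [[sum(r[i] * r[j] for r in rows) for j in range(n)] for i in range(n)]
    Bty = [sum(r[i] * y for r, y in zip(rows, ys)) for i in range(n)]
    params = solve(BtB, Bty)
    preds = [sum(p * v for p, v in zip(params, r)) for r in rows]
    return params, preds

def r_squared(preds):
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - f) ** 2 for y, f in zip(ys, preds))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot

def pct_errors(preds):
    # Assumed definition: error as a percentage of the measured value.
    return [100 * (f - y) / y for y, f in zip(ys, preds)]

# Model 1: y = a*x + b
_, lin_preds = fit(lambda x: [x, 1.0])

# Model 2: y = a*x + b + c*sin(d*x + e), scanning d from 1 to 30 rad/s
best = None
for k in range(581):
    d = 1.0 + 0.05 * k
    params, preds = fit(lambda x, d=d: [x, 1.0, math.sin(d * x), math.cos(d * x)])
    score = r_squared(preds)
    if best is None or score > best[0]:
        best = (score, d, params, preds)
harm_r2, best_d, (a, b, A, B), harm_preds = best
c, e = math.hypot(A, B), math.atan2(B, A)  # back to amplitude and phase

for name, preds in (("linear", lin_preds), ("linear + sine", harm_preds)):
    errs = pct_errors(preds)
    mean_abs = sum(abs(v) for v in errs) / len(errs)
    print(f"{name}: R^2={r_squared(preds):.4f}, mean |err|={mean_abs:.1f}%, "
          f"min/max={min(errs):.0f}%/{max(errs):.0f}%")
print(f"a={a:.2f} b={b:.2f} c={c:.2f} d={best_d:.2f} rad/s e={math.degrees(e):.0f} deg")
```

The grid scan is a deliberate shortcut: with d fixed, everything else is a linear problem, so there are no starting guesses to get wrong. The price is that you have to pick a sensible search range for d yourself.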

So coming back to the original topic. The above long-winded illustration is a very simple case of what I think we have with the CUP to PSI conversion. There is a lot of really complex physics going on in both measurement systems to arrive at a single number. They are clearly correlated, as you would expect since they are both derived from the same physical input (i.e. the time-variant chamber pressure), but there is enough physics/mechanics going on between the gas pressure event and the resulting CUP or transducer measurement (especially the CUP measurement) that writing a meaningful equation that can capture those physics is not trivial, if even possible, especially when you only use the two single-number measurements and none of the other data available (bore diameter, case volume, etc.). The complexity of the measurement systems is why I talked about adding more inputs to the system, to try to capture some more information that might help achieve a better fit for the conversion equation we want.

As @JohnKSa talks about earlier, Mr Ohler used the entire pressure vs time curve from a transducer measurement and could arrive at a pretty accurate CUP prediction. That makes total sense: you could basically simulate a CUP measurement based on the representation of the entire pressure curve captured by the transducer. A finite element model, assuming you have a good finite element model for plastic deformation of copper (not trivial, but getting easier and easier with advances in FEA software), could take the pressure vs time curve as input and replicate the CUP measurement in a 3D computer model. The converse is not true of the CUP measurement. Too much data is lost in the CUP measurement to take it and use it to create a simulation of what the transducer would see. All the temporal data is lost in the CUP measurement; it's all mushed together (pun intended) into one value. It's not that the two measurements are not related or correlated, it's that the relationship between them is so complex that a simple polynomial fit simply cannot capture it, especially in light of how much information is lost in the CUP measurement.

-too much rambling, hope that helps more than it confuses. Hopefully there are not too many typos and bad grammar as my brain is fried on rain, taxes, and least square regressions.

**This was a really simple and slightly contrived example, and even then I had to start with pretty good guesses at my values for a, b, c, d, and e. With an equation this complex there were lots of local minima in the solution space that would get my solver stuck and come up with some strange solutions. Occasionally it would basically turn off the harmonic part of the equation and end up with an equation very similar to the linear solution. And just for completeness, the original equation used to create the clean data set was y = 2*x + 3 + 1*sin(2.1*(2*pi)*x + 135*(pi/180)). The (2*pi) and the (pi/180) are simply converting the frequency and phase angle to the radians that Excel's sin function requires.
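To give a feel for the local minima problem, here is a small Python sketch. The setup is my own simplification, not what the spreadsheet did: it uses the clean generating function with no noise (sine taken as a function of x, 10 seconds at 10 Hz assumed), holds a, b, c, and e at their true values, and only varies the frequency d over a grid I picked (1 to 30 rad/s). Even in that stripped-down case the sum of squared errors has a lot of dips along the d axis, and a solver that only walks downhill from a poor starting guess can settle into one of them.

```python
import math

TRUE_D = 2.1 * 2 * math.pi    # 2.1 Hz expressed in rad/s
TRUE_E = math.radians(135)    # 135 degrees expressed in radians

# Clean (noise-free) samples; 10 s at 10 Hz is an assumption for this sketch.
xs = [i / 10 for i in range(101)]
ys = [2 * x + 3 + math.sin(TRUE_D * x + TRUE_E) for x in xs]

def sse_for_frequency(d):
    """Sum of squared errors when only d is wrong and a, b, c, e are exact."""
    return sum((y - (2 * x + 3 + math.sin(d * x + TRUE_E))) ** 2
               for x, y in zip(xs, ys))

d_grid = [1.0 + 0.05 * k for k in range(581)]   # 1 to 30 rad/s
sse = [sse_for_frequency(d) for d in d_grid]

# A grid point is a local minimum if both of its neighbours are worse.
local_minima = [d_grid[i] for i in range(1, len(sse) - 1)
                if sse[i] < sse[i - 1] and sse[i] < sse[i + 1]]
best_d = d_grid[sse.index(min(sse))]

print(f"true d = {TRUE_D:.3f} rad/s, best grid d = {best_d:.2f} rad/s")
print(f"{len(local_minima)} local minima found between 1 and 30 rad/s")
```

Only one of those dips is the real answer, which is why the starting guess for d matters so much. With all five coefficients free and noise on top, the picture only gets messier.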